Videos peddling false claims about voter fraud and COVID-19 cures draw millions of views on YouTube. Partisan activist groups pretending to be online news sites set up shop on Facebook. Foreign trolls masquerade as U.S. activists on Instagram to sow divisions around the Black Lives Matter protests.

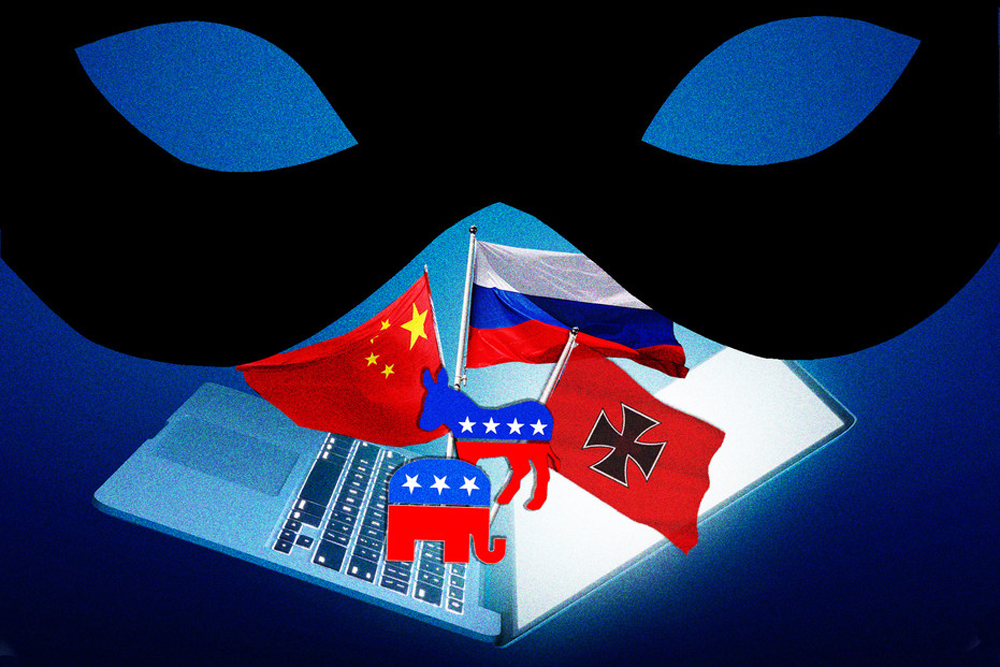

Four years after an election in which Russia and some far-right groups unleashed a wave of false, misleading and divisive online messages, Silicon Valley is losing the battle to eliminate online misinformation that could sway the vote in November.

Social media companies are struggling with an onslaught of deceptive and divisive messaging from political parties, foreign governments and hate groups as the months tick down to this year’s presidential election, according to more than two dozen national security policymakers, misinformation experts, hate speech researchers, fact-checking groups and tech executives, as well as a review of thousands of social media posts by POLITICO.

The tactics, many aimed at deepening divisions among Americans already traumatized by a deadly pandemic and record job losses, echo the Russian government’s years-long efforts to stoke confusion before the U.S. 2016 presidential election, according to experts who study the spread of harmful content. But the attacks this time around are far more insidious and sophisticated — with harder-to-detect fakes, more countries pushing covert agendas and a flood of American groups copying their methods.

And some of the deceptive messages have been amplified by mainstream news outlets and major U.S. political figures — including President Donald Trump. In one instance from last week, he used his large social media following to say, without evidence, that mail-in votes would create “the most inaccurate and fraudulent election in history.”

Silicon Valley’s efforts to contain the new forms of fakery have so far fallen short, researchers and some lawmakers say. And the challenges are only increasing.

“November is going to be like the Super Bowl of misinformation tactics,” said Graham Brookie, director of the D.C.-based Atlantic Council’s Digital Forensic Research Lab, which tracks online falsehoods. “You name it, the U.S. election is going to have it.”

Anger at the social media giants’ inability to win the game of Whac-A-Mole against false information was a recurring theme at last week’s congressional hearing with big tech CEOs, where Facebook boss Mark Zuckerberg attempted to bat down complaints that his company is profiting from disinformation about the coronavirus pandemic. A prime example, House antitrust Chair David Cicilline (D-R.I.) said, was the five hours it took for Facebook to remove a Breitbart video falsely calling hydroxychloroquine a cure for COVID-19.

The post was viewed 20 million times and received more than 100,000 comments before it was taken down, Cicilline noted.

“Doesn’t that suggest, Mr. Zuckerberg, that your platform is so big that even with the right policies in place, you can’t contain deadly content?” Cicilline asked.

The companies deny accusations they have failed to tackle misinformation, highlighting the efforts to take down and prevent false content, including posts about COVID-19 — a public health crisis that has become political.

Since the 2016 election, Facebook, Twitter and Google have collectively spent tens of millions of dollars on new technology and personnel to track online falsehoods and stop them from spreading. They’ve issued policies against political ads that masquerade as regular content, updated internal rules on hate speech and removed millions of extremist and false posts so far this year. In July, Twitter banned thousands of accounts linked to the fringe QAnon conspiracy theory in the most sweeping action yet to stem its spread.

Google announced yet another effort Friday, saying it will begin penalizing websites on Sept. 1 that distribute hacked materials and advertisers who take part in coordinated misinformation campaigns. Had those policies been in place in 2016, advertisers wouldn’t have been able to post screenshots of the stolen emails that Russian hackers had swiped from Hillary Clinton’s campaign.

But despite being some of the world’s wealthiest companies, the internet giants still cannot monitor everything that is posted on their global networks. The companies also disagree on the scope of the problem and how to fix it, giving the peddlers of misinformation an opportunity to poke for weaknesses in each platform’s safeguards.

National flashpoints like the COVID-19 health crisis and Black Lives Matter movement have also given the disinformation artists more targets for sowing divisions.

The difficulties are substantial: foreign interference campaigns have evolved, domestic groups are copycatting those techniques and political campaigns have adapted their strategies.

At the same time, social media companies are being squeezed by partisan scrutiny in Washington that makes their judgment calls about what to leave up or remove even more politically fraught: Trump and other Republicans accuse the companies of systematically censoring conservatives, while Democrats lambast them for allowing too many falsehoods to circulate.

Researchers say it’s impossible to know how comprehensive the companies have been in removing bogus content because the platforms often put conditions on access to their data. Academics have had to sign non-disclosure agreements promising not to criticize the companies to gain access to that information, according to people who signed the documents and others who refused to do so.

Experts and policymakers warn the tactics will likely become even more advanced over the next few months, including the possible use of so-called deepfakes, or false videos created through artificial intelligence, to create realistic-looking footage that undermines the opposing side.

“As more data is accumulated, people are going to get better at manipulating communication to voters,” said Robby Mook, campaign manager for Hillary Clinton’s 2016 presidential bid and now a fellow at the Harvard Kennedy School.

Foreign interference campaigns evolve

Researcher Young Mie Kim was scrolling through Instagram in September when she came across a strangely familiar pattern of partisan posts across dozens of social media accounts.

Kim, a professor at the University of Wisconsin-Madison specializing in political communication on social media, noticed a number of the seemingly unrelated accounts using tactics favored by the Russia-linked Internet Research Agency, a group that U.S. national security agencies say carried out a multiyear misinformation effort aimed at disrupting the 2016 election — in part by stoking existing partisan hatred.

The new accounts, for example, pretended to be local activists or politicians and targeted their highly partisan messages at battleground states. One account, called “iowa.patriot,” attacked Elizabeth Warren. Another, “bernie.2020_,” accused Trump supporters of treason.

“It stood out immediately,” said Kim, who tracks covert Russian social media activity targeted at the U.S. “It was very prevalent.” Despite Facebook’s efforts, it appeared the IRA was still active on the platform. Her hunch was later confirmed by Graphika, a social media analytics firm that provides independent analysis for Facebook.

The social networking giant has taken action on at least some of these covert campaigns. A few weeks after Kim found the posts, Facebook removed 50 IRA-run Instagram accounts with a total of nearly 250,000 online followers — including many of those she had spotted, according to Graphika.

“We’re seeing a ramp up in enforcement,” Nathaniel Gleicher, Facebook’s head of cybersecurity policy, told POLITICO, noting that the company removed about 50 networks of falsified accounts last year, compared with just one in 2017.

Since October, Facebook, Twitter and YouTube have removed at least 10 campaigns promoting false information involving accounts linked to authoritarian countries like Russia, Iran and China that had targeted people in the U.S., Europe and elsewhere, according to company statements.

But Kim said that Russia’s tactics in the U.S. are evolving more quickly than social media sites can identify and take down accounts. Facebook alone has 2.6 billion users — a gigantic universe for bad actors to hide in.

In 2016, the IRA’s tactics were often unsophisticated, like buying Facebook ads in Russian rubles or producing crude, easily identifiable fakes of campaign logos.

This time, Kim said, the group’s accounts are operating at a higher level: they have become better at impersonating both candidates and parties; they’ve moved from creating fake advocacy groups to impersonating actual organizations; and they’re using more seemingly nonpolitical and commercial accounts to broaden their appeal online without raising red flags to the platforms.

The Kremlin has already honed these new approaches abroad. In a spate of European votes — most notably last year’s European Parliament election and the 2017 Catalan independence referendum — Russian groups tried out new disinformation tactics that are now being deployed ahead of November, according to three policymakers from the EU and NATO who were involved in those analyses.

Kim said one likely reason for foreign governments to impersonate legitimate U.S. groups is that the social media companies are reluctant to police domestic political activism. While foreign interference in elections is illegal under U.S. law, the companies are on shakier ground if they take down posts or accounts put up by Americans.

Facebook’s Gleicher said his team of misinformation experts has been cautious about moving against U.S. accounts that post about the upcoming election because they do not want to limit users’ freedom of expression. When Facebook has taken down accounts, he said, it was because they misrepresented themselves, not because of what they posted.

Still, most forms of online political speech face only limited restrictions on the networks, according to the POLITICO review of posts. In invite-only groups on Facebook, YouTube channels with hundreds of thousands of views, and Twitter messages that have been shared by tens of thousands of people, partisan — often outright false — messages are shared widely by those interested in the outcome of November’s vote.

Russia has also become more brazen in how it uses state-backed media outlets — as has China, whose presence on Western social media has skyrocketed since last year’s Hong Kong protests. Both Russia’s RT and China’s CGTN television operations have made use of their large social media followings to spread false information and divisive messages.

Moscow- and Beijing-backed media have piggybacked on hashtags related to the COVID-19 pandemic and recent Black Lives Matter protests to flood Facebook, Twitter and YouTube with content stoking racial and political divisions.

China has been particularly aggressive, with high-profile officials and ambassadorial accounts promoting conspiracy theories, mostly on Twitter, that the U.S. had created the coronavirus as a secret bioweapon.

Twitter eventually placed fact-checking disclaimers on several posts by Lijian Zhao, a spokesperson for the Chinese foreign ministry with more than 725,000 followers, who pushed that falsehood. But by then, the tweets had been shared thousands of times as the outbreak surged this spring.

“Russia is doing right now what Russia always does,” said Bret Schafer, a media and digital disinformation fellow at the German Marshall Fund of the United States’ Alliance for Securing Democracy, a Washington think tank. “But it’s the first time we’ve seen China fully engaged in a narrative battle that doesn’t directly affect Chinese interests.”

Other countries, including Iran and Saudi Arabia, similarly have upped their misinformation activity aimed at the U.S. over the last six months, according to two national security policymakers and a misinformation analyst, all of whom spoke on the condition of anonymity because of the sensitivity of their work.

Domestic extremist groups copycatting

U.S. groups have watched the foreign actors succeed in peddling falsehoods online, and followed suit.

Misinformation experts say that since 2016, far-right and white supremacist activists have begun to mimic the Kremlin’s strategies as they stoke division and push political messages to millions of social media users.

“By volume and engagement, domestic misinformation is the more widespread phenomenon. It’s not close,” said Emerson Brooking, a resident fellow at the Atlantic Council’s Digital Forensic Research Lab.

Early this year, for instance, posts from “Western News Today” — a Facebook page portraying itself as a media outlet — started sharing racist links to content from VDARE, a website that the Southern Poverty Law Center had defined as promoting anti-immigration hate speech.

Other accounts followed within minutes, posting the same racist content and linking to VDARE and other far-right groups across multiple pages — a coordinated action that Graphika said mimicked the tactics of Russia’s IRA.

Previously, many of these hate groups had shared posts directly from their own social media accounts but received little, if any, traction. Now, by impersonating others, they could spread their messages beyond their far-right online bubbles, said Chloe Colliver, head of the digital research unit at the Institute for Strategic Dialogue, a London-based think tank that tracks online hate speech.

And by pretending to be different online groups with little if any connection to each other, the groups posting VDARE messages appeared to avoid getting flagged as a coordinated campaign, according to Graphika.

Eventually, Facebook removed the accounts — along with others associated with the QAnon movement, an online conspiracy theory that portrays Trump as doing battle with elite pedophiles and a liberal “deep state.”

The company stressed that the takedowns were directed at misrepresentation, not at right-wing ideology. But Colliver said those distinctions have become more difficult to make: The tactics of far-right groups have become increasingly sophisticated, hampering efforts to tell who is driving these online political campaigns.

“The biggest fault line is how to label foreign versus domestic, state versus non-state content,” she said.

In addition to targeted takedowns, tech companies have adopted broader policies to combat misinformation. Facebook, Twitter and YouTube have banned what they call manipulated media, for instance, to try to curtail deepfakes. They’ve also taken broad swipes at voting-related misinformation by banning content that deceives people about how and when to vote, and by promoting authoritative sources of information on voting.

While the social networks’ policies have caused political ads to become more transparent than in 2016, many partisan ads still run without disclaimers, often for weeks.

“Elections are different now and so are we,” said Kevin McAlister, a Facebook spokesperson. “We’ve created new products, partnerships, and policies to make sure this election is secure, but we’re in an ongoing race with foreign and domestic actors who evolve their tactics as we shore up our defenses.”

“We will continue to collaborate with law enforcement and industry peers to protect the integrity of our elections,” Google said in a statement.

Twitter trials scenarios to anticipate what misinformation might crop up in future election cycles, the company says, learning from each election since the 2016 race in the U.S. and tweaking its platform as a result.

“It’s always an election year on Twitter — we are a global service and our decisions reflect that,” said Jessica Herrera-Flanigan, vice president of public policy for the Americas.

Critics have said those policies are undermined by uneven enforcement. Political leaders get a pass on misleading posts that would be flagged or removed from other users, they argue, though Twitter in particular has become more aggressive in taking action on such posts.

Political campaigns learn and adapt

It’s not just online extremists improving their tactics. U.S. political groups also keep finding ways to get around the sites’ efforts to force transparency in political advertising.

Following the 2016 vote, the companies created databases of political ads and who paid for them to make it clear when voters were targeted with partisan messaging. Google and Facebook now require political advertisers around the world to prove their identities before purchasing messages.
